Ready to make incident response your competitive advantage?

See how Uptime Labs builds provable, scalable incident response capability across your organisation.

Note: This article was initially published on 13th May 2024. This is the updated version.

What if Everyone Left?

In ‘The World Without Us’, author Alan Weisman explored what would happen to the planet if humans were suddenly to disappear.

Within a few short days, the power grid and infrastructure would start to fail. New York’s subway would flood once the pumps holding back groundwater stopped working. Within weeks, the planet’s 400+ nuclear power stations would start to melt down, creating lakes of radioactive lava and rendering the surrounding areas uninhabitable to most remaining species for centuries.

Over millennia, the planet would recover, eventually thriving, but it’s fair to say that it’d be a bumpy ride.

Meanwhile, Back at the Office…

How long might your technology systems continue to run if the supporting staff weren’t there?

No cataclysm or lava lake required: a badly managed org restructure, or an unfortunate breakdown in vacation planning, would do it.

How long?

A day? A week? A month?

Anyone taking this question seriously might glance at their recent MTBF (mean time between failures) figures and take comfort from how rarely serious incidents have occurred. Infrequent downtime is great news: it indicates that the socio-technical system (people, their relationships, technology, and the relationships between people and technology) is, at the very least, keeping the lights on under prevailing market conditions.

But how ‘hands off’ exactly are those lights? Are they confidently shining day and night, week by week, month by month? Or do they require the constant supervision of a team of skilled people, attentive to every flicker: prodding, probing and administering treatment as if the lights were on life support?

It’s likely that your systems are closer to the latter than the former. That’s not to say you’re doing badly; it’s simply to recognise that, more often than not, complex systems run in degraded mode. This makes intuitive sense. We’re all familiar with the bugs in production, the ticking time bombs, the ‘explodey bits’, and the systems that require close supervision and frequent intervention to stop them from going off.

However, these continual efforts are easy to miss. David Woods put it best in his Law of Fluency, which states, “Well-adapted cognitive work occurs with a facility that belies the difficulty of resolving demands and balancing dilemmas. The adaptation process hides the factors and constraints that are being adapted to or around.”

In other words, your people are good. Your people are so good that the critical work they do to keep systems running often goes unnoticed, and even if you did notice it, it would look like nothing! And what do you do when your systems stay up and you don’t notice that anything is wrong? That’s right, nothing! And therefore, what do you learn…?

Every once in a while, despite best efforts, systems will fail, customers will be impacted, perhaps a root cause analysis will take place, and learning will commence. The commitment to learning is likely proportional to the impact of the incident, with the greatest commitment reserved for the gravest of impacts. This is totally understandable, but ultimately undesirable if the goal is learning, reliable systems and uptime.

Resilience as a Verb

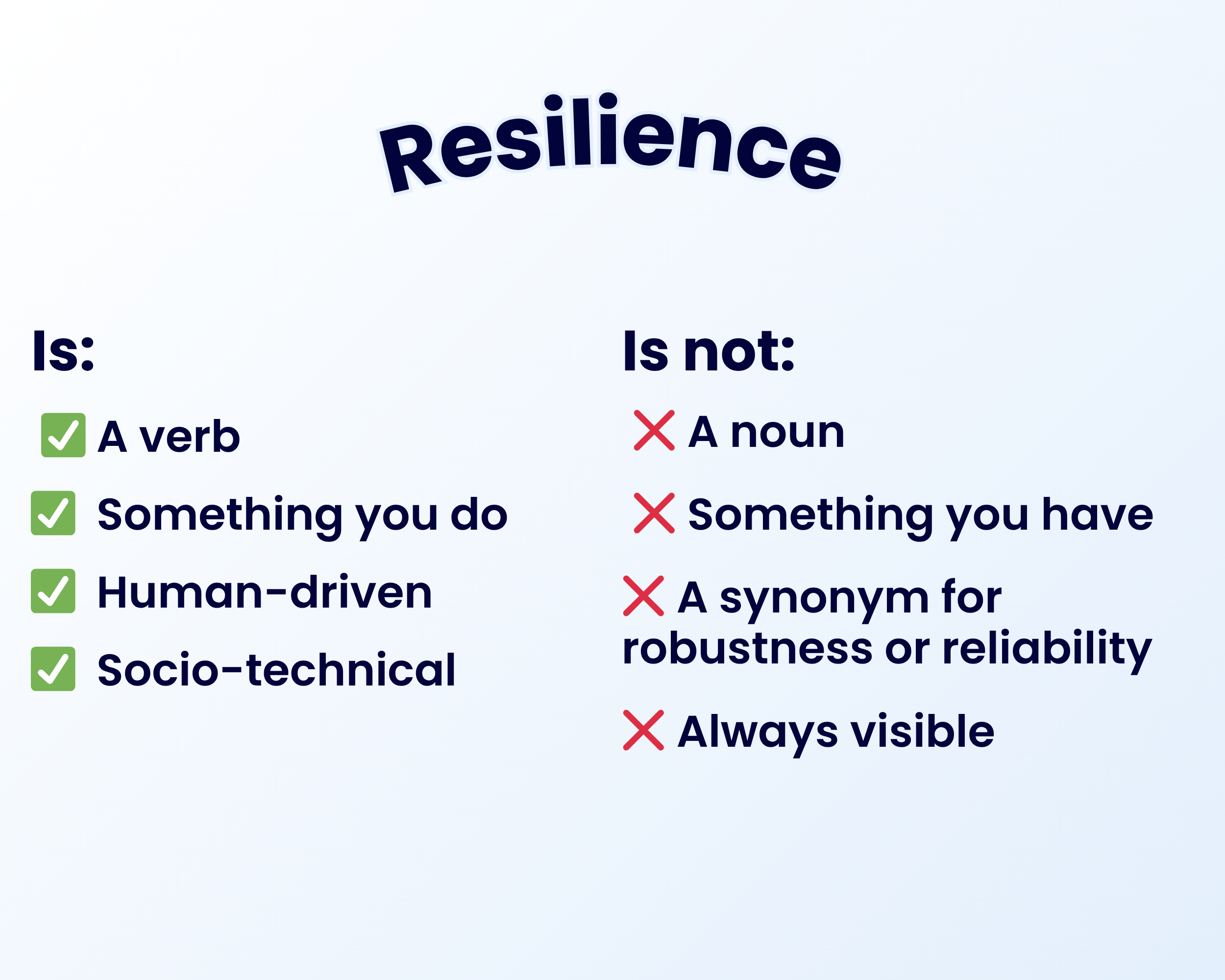

Erik Hollnagel, one of the founders of resilience engineering, stated that ‘Resilience is something you do, not something you have’. David Woods refined this idea further, reframing the word ‘resilience’ as a verb rather than a noun.

We often colloquially think of ‘resilience’ as a synonym for ‘robustness’ or ‘reliability’, but whereas these nouns represent static properties of a system, resilience is more dynamic, concerning how a system adapts in the face of strain or adversity.

If you think of resilience in this way, you can start asking the question, “What resilience are we doing?” Simply asking this question encourages you to notice both the visible and hidden activities that happen every day. These activities nurture adaptability and help the organisation flex around the constant challenges it faces.

Practices for Strengthening Resilience

If you can surface what’s actually going on, rather than keeping it hidden, you have a chance of improving your capacity to adapt. That adaptability will serve you well when systems become stretched.

So what might you do to encourage this way of thinking? Here are some ideas, which I’ve sorted into three categories: making the most of post-mortems or PIRs, building a strong incident response culture, and general advice.

Making the Most of Post-Mortems/Post-Incident Reviews (PIRs)

- Study near misses. What did happen that meant an incident didn’t happen?

- Create measurements/KPIs that encourage the reporting of issues rather than the suppression of issues (e.g. don’t have a ‘number of incidents’ KPI where low = good and high = bad, as you’ll end up with more incidents but fewer reports)

- Talk to practitioners rather than just managers; practitioners really know what's going on.

- Document incidents as compelling stories that people will want to read or listen to and share

Building a Strong Incident Response Culture

- Practise incident response, rather than just learning about it in theory. How can you do this? Try immersive incident response simulations that replicate the urgency of real incidents.

- Approach low-impact incidents with the same commitment to learning as high-impact ones. Consistent attention to lower-impact incidents can help your organisation build a culture of continuous improvement.

- Run periodic resilience retrospectives, especially when serious incidents haven’t occurred.

- Use on-call handovers to surface interesting and uninteresting things that happened. If this is something that interests you, I would recommend Chad Todd’s paper, ‘Handover Communications in Software Operations’.

General Advice

- Make friends with your customer service people. They’ll tell you about issues that never appeared in your observability systems.

- Give folks with an interest in resilience engineering the opportunity to rotate around different teams, sharing their knowledge and learning from others.

- Implement processes that enable fast, safe change, such as continuous delivery. Continuous delivery and deployment not only reduce the blast radius of any single change, but also build organisational confidence in releasing frequently.

What else? We’re interested in hearing about what you do.

Here’s to the Humans

Regardless of where they occur in your organisation, these activities ARE your resilience in action. What’s more, they’re mostly human in nature.

Yes, you’ve doubtless got technological redundancy, failover and fault tolerance, but resilience is in your people, and it’s probably hidden. In these times when cost is under scrutiny and faith is being placed in automation and AI, it's more important than ever to discover and recognise the vital role your staff play in your organisation’s resilience.

With that in mind, how long might your technology systems continue to run if the supporting staff were to leave?

Maybe not as long as you’d think.
